If you manage a growing universe of contracts, “AI” promises to read everything, extract every clause, and answer every question—instantly. The reality is both better and more practical: modern systems do make contracts searchable, structured, and alert-ready, but only when you pair the right models with the right workflows, data controls, and success metrics. This article separates signal from noise and gives you a concrete, two-week proof-of-concept (POC) plan to evaluate vendors with confidence.

A quick glossary (no hand-waving)

OCR (Optical Character Recognition).

Turns pixels into text. Quality depends on scan resolution, fonts, language packs, and page artifacts (stamps, signatures, redlines). OCR is the foundation—bad OCR equals bad everything.

NLP (Traditional Natural Language Processing).

Rule-based and statistical techniques (regexes, CRFs, SVMs) to detect entities, dates, parties, and clause headers. Fast and predictable for narrow tasks; brittle when contracts deviate from expected patterns.

LLM (Large Language Model).

Neural models that understand and generate language. Excellent for clause classification, field extraction in messy layouts, and drafting summaries. Must be wrapped with guardrails, evaluation, and privacy controls.

RAG (Retrieval-Augmented Generation).

Instead of asking an LLM to “remember,” retrieve the relevant pages/clauses and let the model answer with citations. Reduces hallucinations and gives reviewers anchors.

HITL (Human-in-the-Loop).

People review a sample (or 100%) of predictions, correct errors, and feed improvements back into the system. This is quality control and risk management—not a failure of AI, but a design requirement.

What AI for contracts can (and cannot) do today

Can do reliably

- Identify standard clause families (confidentiality, governing law, assignment, renewals).
- Extract common fields (effective date, term, notice period, parties, signatures).
- Normalize values (e.g., “thirty (30) days” → 30 days) and map them to structured fields.
- Summarize key risks and obligations with citations to the source span.
- Trigger reminders and tasks based on extracted dates/thresholds.
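To make the normalization item above concrete, here is a minimal sketch of turning a phrase like “thirty (30) days” into a structured value. The word list, the `Duration` type, and the `normalize_duration` function are illustrative assumptions, not any vendor's implementation; a production extractor would cover far more phrasings.

```python
import re
from typing import NamedTuple, Optional

# Hypothetical lookup table; it covers only a handful of common spelled-out values.
WORD_VALUES = {
    "one": 1, "two": 2, "three": 3, "five": 5, "seven": 7, "ten": 10,
    "twelve": 12, "fourteen": 14, "fifteen": 15, "thirty": 30,
    "forty-five": 45, "sixty": 60, "ninety": 90,
}
UNIT_RE = re.compile(r"\b(day|week|month|year)s?\b", re.IGNORECASE)


class Duration(NamedTuple):
    value: int
    unit: str
    needs_review: bool  # True when the spelled-out and numeric forms disagree


def normalize_duration(text: str) -> Optional[Duration]:
    """Map a phrase such as 'thirty (30) days' to a structured duration."""
    unit_match = UNIT_RE.search(text)
    if unit_match is None:
        return None
    prefix = text[:unit_match.start()].lower()
    digits = re.findall(r"\d+", prefix)
    words = [w for w in re.findall(r"[a-z]+(?:-[a-z]+)?", prefix) if w in WORD_VALUES]
    digit_value = int(digits[-1]) if digits else None
    word_value = WORD_VALUES[words[-1]] if words else None
    if digit_value is None and word_value is None:
        return None
    value = digit_value if digit_value is not None else word_value
    conflict = (
        digit_value is not None
        and word_value is not None
        and digit_value != word_value
    )
    return Duration(value, unit_match.group(1).lower() + "s", conflict)


print(normalize_duration("thirty (30) days"))
```

The `needs_review` flag is a design choice: when a contract says “sixty (90) days,” the sketch does not guess which form is right and instead marks the value for a human reviewer.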

Still hard or variable

- Complex, negotiated language that hides obligations across multiple sections (e.g., CPI formulas split across exhibits).
- Low-quality scans, faxes, and documents with heavy track-changes or watermarks.
- Multilingual documents that mix languages on the same page; niche jurisdictions with uncommon terms.
- Table extraction when the table is an image, rotated, or split across pages.

Real-world success comes from designing for the hard parts: better OCR pipelines, careful evaluation, and HITL review queues that keep accuracy—and trust—high.

Evaluation criteria that actually predict success

1) Accuracy you can defend: precision, recall, and the dataset

Ask vendors for precision/recall/F1 on your documents, not marketing samples. Define the unit of truth (per-field, per-clause, per-document) and insist on:

- A stratified test set representing your reality: legacy scans, modern PDFs, different languages, and negotiated vs. template agreements.
- A confusion analysis (what was misclassified as what) and error taxonomy (date misread, unit normalization error, clause missed, wrong page).
- Inter-rater agreement between two human reviewers on the ground truth; otherwise you chase model “errors” that are actually annotation disagreements.

Tip: Measure both recall (did it find every renewal clause?) and precision (are the ones it found correct?). Missing one auto-renewal may be worse than flagging two false positives.
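For readers who want the arithmetic behind these numbers, the sketch below computes precision, recall, and F1 from raw counts, plus Cohen's kappa as one common way to quantify inter-rater agreement. The article does not prescribe kappa specifically; it is used here as an assumption, and the function names are illustrative.

```python
from collections import Counter


def precision_recall_f1(tp: int, fp: int, fn: int):
    """Compute the three metrics from raw true/false positive and false negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def cohens_kappa(labels_a, labels_b) -> float:
    """Agreement between two reviewers, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both reviewers must label the same non-empty set of items")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Example: 46 renewal clauses found correctly, 4 false alarms, 2 missed.
p, r, f1 = precision_recall_f1(tp=46, fp=4, fn=2)
print(f"precision={p:.3f} recall={r:.3f} f1={f1:.3f}")
```

Asking the vendor for the raw `tp`, `fp`, and `fn` counts lets you recompute every percentage yourself.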

2) Coverage and robustness

- Clause taxonomy fit. Can you define custom fields/clauses (e.g., “IP indemnity cap” or “Most Favored Customer”)?
- Layout variance. Performance on contracts with unusual headers, dense schedules, or embedded images.
- Redlines & track changes. Can the system ignore markup or does it “read” deletions as if they’re active text?

3) Explainability and traceability

- Span highlighting for every extracted field (show me the words that caused the prediction).
- Confidence scores that correlate with actual correctness (low confidence → automatic HITL routing).
- Versioned models and immutable logs: when a value changes due to a model update or a human correction, you must be able to prove who/what changed it and when.
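One way to check whether confidence scores “correlate with actual correctness” is to bucket predictions by confidence and compare each bucket's observed accuracy. The following is a rough sketch; the bucket edges and the `calibration_table` name are assumptions chosen for illustration.

```python
def calibration_table(predictions, bin_edges=(0.0, 0.5, 0.8, 0.9, 1.0)):
    """Group (confidence, is_correct) pairs into bins and report accuracy per bin.

    `predictions` is a list of (confidence, is_correct) tuples taken from the
    reviewed test set. Empty bins are skipped.
    """
    rows = []
    for low, high in zip(bin_edges, bin_edges[1:]):
        is_last = high == bin_edges[-1]
        in_bin = [
            ok for conf, ok in predictions
            if low <= conf < high or (is_last and conf == high)
        ]
        if in_bin:
            rows.append({
                "range": (low, high),
                "count": len(in_bin),
                "accuracy": sum(in_bin) / len(in_bin),
            })
    return rows


sample = [(0.95, True), (0.92, True), (1.0, True),
          (0.85, True), (0.82, False), (0.40, False)]
for row in calibration_table(sample):
    print(row)
```

If accuracy in the top bucket is not clearly higher than in the lower ones, the scores are a weak basis for automatic routing.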

4) Security & privacy by default

- Data residency options (e.g., EU/Germany hosting) and clear sub-processor disclosures.
- Encryption in transit and at rest, key management, least-privilege access to production data.
- RBAC + SSO/MFA to prevent oversharing and enforce need-to-know.
- PII handling: masking/redaction pipelines; the ability to process sensitive documents without vendor retention.
- Logging & retention: exportable, tamper-evident logs and configurable retention/deletion schedules.
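“Tamper-evident” can sound abstract, so here is a small sketch of one common technique, a hash chain, where each log entry includes the hash of the previous one. This is an illustration under assumptions (the entry layout and function names are made up); it is not a description of how any particular product stores its logs.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"actor": "model-v3", "field": "notice_period", "value": "30 days"})
append_entry(log, {"actor": "reviewer-1", "field": "notice_period", "value": "60 days"})
print(verify_chain(log))
```

A chain like this only detects edits if the latest hash is also kept somewhere the editor cannot reach, which is why exportability matters alongside tamper evidence.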

5) Workflow fit and integrations

- Queue design: Can you sort by risk, confidence, or due date?
- E-signature/DMS/CRM/ERP connectors: Pull metadata from CRM; push obligations back as tasks; store executed copies with correct permissions.
- APIs and webhooks: So you can keep a system of record in sync and automate downstream alerts.

6) Cost model and total cost of ownership (TCO)

- Pricing levers: per document, per page, per user, per compute hour; understand what scales with volume.
- Hidden costs: annotation effort, re-processing low-quality scans, manual QC for low-confidence items.
- Value capture: time saved, penalties avoided, renewals captured; build a rough business case with conservative assumptions.
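A rough TCO model can be a few lines of arithmetic. The sketch below assumes per-page pricing plus a fixed annual fee, with manual review as the main hidden cost; every input in the example call is a placeholder to replace with your own quotes and volumes, not a benchmark.

```python
def annual_tco(docs_per_year, pages_per_doc, price_per_page,
               review_rate, minutes_per_review, reviewer_hourly_cost,
               fixed_annual_fee=0.0):
    """Estimate yearly cost: vendor processing + human review + fixed fees.

    review_rate is the share of documents routed to manual QC (0.0 to 1.0).
    """
    processing = docs_per_year * pages_per_doc * price_per_page
    review_hours = docs_per_year * review_rate * minutes_per_review / 60
    review = review_hours * reviewer_hourly_cost
    return {
        "processing": processing,
        "review": review,
        "fixed": fixed_annual_fee,
        "total": processing + review + fixed_annual_fee,
    }


# Placeholder inputs for illustration only.
estimate = annual_tco(
    docs_per_year=10_000, pages_per_doc=20, price_per_page=0.05,
    review_rate=0.20, minutes_per_review=6, reviewer_hourly_cost=60.0,
    fixed_annual_fee=12_000.0,
)
print(estimate)
```

Rerunning the function with a higher `review_rate` shows how quickly manual QC can overtake the vendor invoice as the dominant cost.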

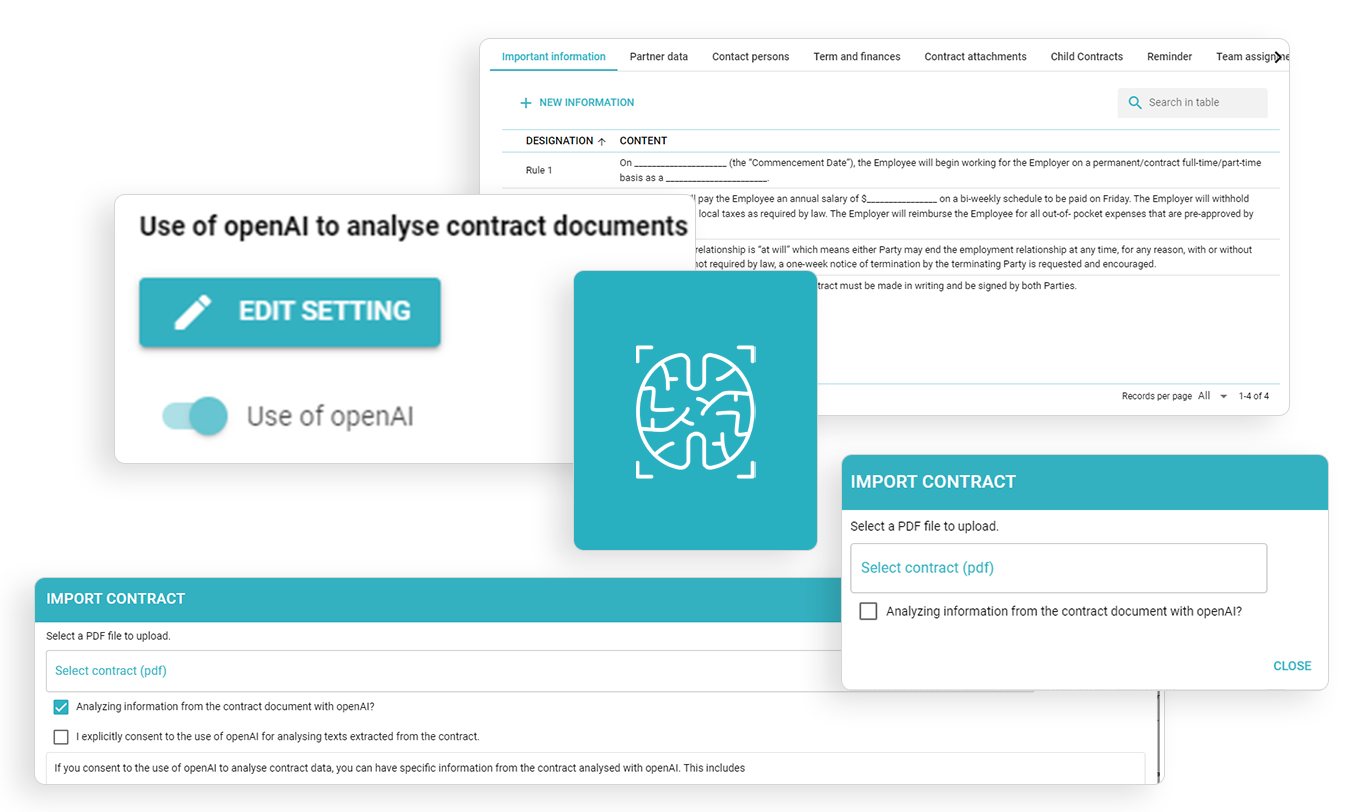

Scan with AI

Scan with AI and place common details automatically.

Available for all versions and possible to disable.

A focused two-week POC plan (use this as your checklist)

Goal: Decide “go/no-go” based on your contracts, with numbers you can present to Legal, Finance, and IT.

Day 0: Define success

- Use-cases: e.g., detect auto-renewals and notice periods; extract CPI escalators; classify governing law.
- Acceptance criteria: e.g., ≥ 92% recall and ≥ 90% precision on renewals and notice periods; ≥ 80% reduction in manual search time.
- Constraints: data residency required? PII masking? Reviewer headcount?
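Writing the acceptance criteria down as data before the POC starts makes the final decision mechanical. A minimal sketch, using the example thresholds above; the dictionary keys and the `evaluate_gate` name are hypothetical.

```python
# Example thresholds taken from the acceptance criteria above.
ACCEPTANCE = {
    "recall": 0.92,
    "precision": 0.90,
    "search_time_reduction": 0.80,
}


def evaluate_gate(measured: dict, criteria: dict = ACCEPTANCE):
    """Return ('go' | 'no-go', failures) where failures maps metric -> (measured, minimum)."""
    failures = {}
    for name, minimum in criteria.items():
        value = measured.get(name)
        if value is None or value < minimum:
            failures[name] = (value, minimum)
    return ("go" if not failures else "no-go", failures)


decision, failures = evaluate_gate(
    {"recall": 0.94, "precision": 0.88, "search_time_reduction": 0.85}
)
print(decision, failures)
```

A metric that was never measured counts as a failure here, which keeps an incomplete POC from passing by omission.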

Days 1–3: Build the evaluation pack

- 150–250 documents representing your mix (10–20% low-quality scans; 20–30% negotiated; 10–20% non-English if relevant).
- Blind ground truth by two reviewers; adjudicate disagreements.
- Red team set (10–20 docs) with tricky layouts, cross-referenced obligations, and edge cases.
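Drawing the evaluation pack by quota keeps the mix honest. The sketch below makes a simplifying assumption: each document carries exactly one stratum label, whereas in practice a low-quality scan can also be negotiated or non-English. The stratum names and quotas are illustrative and fall inside the ranges above for a 200-document pack.

```python
import random
from collections import defaultdict


def stratified_sample(documents, quotas, seed=0):
    """Pick a fixed number of documents per stratum.

    documents: list of (doc_id, stratum) pairs
    quotas:    dict mapping stratum -> number of documents wanted
    """
    rng = random.Random(seed)  # fixed seed so the pack can be reproduced
    by_stratum = defaultdict(list)
    for doc_id, stratum in documents:
        by_stratum[stratum].append(doc_id)

    sample = {}
    for stratum, wanted in quotas.items():
        pool = by_stratum.get(stratum, [])
        if len(pool) < wanted:
            raise ValueError(f"Only {len(pool)} documents available for '{stratum}', need {wanted}")
        sample[stratum] = rng.sample(pool, wanted)
    return sample


# Hypothetical corpus: 400 documents per stratum.
strata = ["low_quality_scan", "negotiated", "non_english", "template"]
corpus = [(f"{s}-{i}", s) for s in strata for i in range(400)]
quotas = {"low_quality_scan": 30, "negotiated": 50, "non_english": 30, "template": 90}
pack = stratified_sample(corpus, quotas)
print({stratum: len(ids) for stratum, ids in pack.items()})
```

Raising an error when a stratum is short is deliberate: it surfaces gaps in the corpus before the vendor run rather than after.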

Days 4–7: Run the pipelines

- Vendor processes the pack using standard settings; no special tuning except allowed integrations (OCR, language packs).
- You measure per-field and per-clause metrics; compute precision/recall/F1; populate an error taxonomy.
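The raw counts behind per-field metrics come from comparing vendor output to ground truth document by document. Here is a hedged sketch of that comparison. It adopts one common scoring convention (a wrong value counts as both a false positive and a false negative), which you should agree on with the vendor up front; the function names and the error labels in the example are assumptions.

```python
from collections import Counter


def score_field(gold: dict, predicted: dict) -> Counter:
    """Count tp/fp/fn for one field.

    gold, predicted: doc_id -> extracted value (None or missing if absent).
    """
    counts = Counter()
    for doc_id in set(gold) | set(predicted):
        truth, guess = gold.get(doc_id), predicted.get(doc_id)
        if truth is None and guess is None:
            continue  # correctly left empty; not counted in precision/recall
        if guess is not None and guess == truth:
            counts["tp"] += 1
        elif truth is None:
            counts["fp"] += 1  # value reported where none exists
        elif guess is None:
            counts["fn"] += 1  # value missed entirely
        else:
            counts["fp"] += 1  # wrong value: penalized on both sides
            counts["fn"] += 1
    return counts


def tally_errors(labels):
    """Rank reviewer-assigned error labels by frequency."""
    return Counter(labels).most_common()


gold = {"doc-a": 30, "doc-b": 60, "doc-c": None, "doc-d": 90}
predicted = {"doc-a": 30, "doc-b": 90, "doc-c": 30}
print(score_field(gold, predicted))
print(tally_errors(["date misread", "clause missed", "date misread"]))
```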

Days 8–10: Human-in-the-loop test

- Route low-confidence items to reviewers; measure time-to-correct and post-review accuracy.
- Confirm that corrections are audited and re-used (e.g., active learning or rule updates).

Days 11–12: Security & privacy verification

- Review data flows, logs, sub-processors, and residency.
- Confirm RBAC, SSO/MFA, PII masking, retention/deletion options.
- Export SIEM-ready logs for a sample document journey.

Days 13–14: Executive readout

- Present metrics, risks, and costs: where the model is reliable, where HITL remains essential, and what ROI looks like (time saved, penalties avoided).
- Decide go/no-go and, if “go,” the rollout plan (templates first, legacy import later).

Deliverables you should insist on

- Confusion matrix, precision/recall/F1 per field/clause.
- Error taxonomy with suggested mitigations.
- Example span highlights and confidence distributions.
- Security architecture diagram + DPA/sub-processor list.
- TCO model with volume assumptions.

Red flags and promises to treat with caution

- “100% accuracy.” Not a thing. Ask for precision/recall on your dataset with raw counts, not percentages alone.
- “No training, no review needed.” You still need policy tuning (what counts as a “renewal” for your org?) and HITL for edge cases.
- “Upload everything first.” Start with a representative sample. Mass ingestion before evaluation creates a digital junk drawer.
- Opaque models. If a vendor can’t show span highlights, model versioning, or change logs, auditing will be painful.
- Data retention by default. Improvements shouldn’t require keeping your data unless you explicitly opt in with contractual safeguards.

Designing for explainability (so Legal actually trusts the outputs)

Legal teams don’t want mysticism; they want receipts:

- Citations everywhere. Every summary sentence should link to the exact clause.
- Confidence thresholds. For high-stakes fields (e.g., notice period), set a minimum confidence to auto-approve; everything else routes to review.
- Source-of-truth discipline. The executed PDF remains the canonical record; structured fields are a friendly index, not a replacement.
- Change control. When a value changes (new model, human edit), store a delta with who/what/when/why.

This is how you move from “AI says so” to “Here’s the clause, here’s who verified it, and here’s when.”
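The threshold and change-control ideas above can be sketched in a few lines. The per-field thresholds, the `route` function, and the `ChangeRecord` layout below are hypothetical; real values should come from your own calibration data and audit requirements.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any

# Hypothetical minimum confidence for auto-approval, stricter for high-stakes fields.
AUTO_APPROVE_MIN = {"notice_period": 0.98, "governing_law": 0.90}
DEFAULT_MIN = 0.95


def route(field_name: str, confidence: float) -> str:
    """Auto-approve only at or above the field's threshold; otherwise send to review."""
    threshold = AUTO_APPROVE_MIN.get(field_name, DEFAULT_MIN)
    return "auto_approve" if confidence >= threshold else "human_review"


@dataclass(frozen=True)
class ChangeRecord:
    """An immutable delta capturing who/what/when/why for one field change."""
    field_name: str
    old_value: Any
    new_value: Any
    changed_by: str   # a user id or a model version
    reason: str
    changed_at: datetime


def record_change(field_name, old_value, new_value, changed_by, reason) -> ChangeRecord:
    return ChangeRecord(field_name, old_value, new_value, changed_by, reason,
                        datetime.now(timezone.utc))


print(route("notice_period", 0.97))
print(record_change("notice_period", 30, 60, "reviewer-1", "misread numeral in scan"))
```

Freezing the record keeps a delta from being edited after the fact; corrections become new records rather than overwrites.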

Where AI adds the most value right now

- Renewal & notice vigilance. Extract notice windows and auto-renewal toggles; assign owners; escalate before deadlines.
- Commercial term normalization. Pull and standardize payment terms, CPI formulas, discounts, and SLAs across vendors/customers.
- Risk triage. Flag non-standard liability caps, indemnity scopes, MFN, governing law/jurisdiction mismatches.
- Legacy unlock. Convert cabinet PDFs into a queryable corpus so teams answer questions in minutes, not days.

All four create measurable wins (penalties avoided, revenue retained, time saved) and form the backbone of a credible ROI story.
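For renewal and notice vigilance, the downstream arithmetic is simple once the fields are extracted. The sketch below assumes notice periods are counted in calendar days back from the term end date; contracts often count differently (business days, “prior to” versus “no later than”), so treat it as an illustration and have counsel confirm the counting rule. The function names and reminder offsets are made up.

```python
from datetime import date, timedelta


def notice_deadline(term_end: date, notice_days: int) -> date:
    """Last day to give notice, assuming calendar days counted back from term end."""
    return term_end - timedelta(days=notice_days)


def reminder_dates(deadline: date, lead_days=(90, 30, 7)):
    """Dates on which to alert the contract owner ahead of the deadline."""
    return [deadline - timedelta(days=days) for days in lead_days]


deadline = notice_deadline(date(2025, 12, 31), notice_days=90)
print(deadline)
print(reminder_dates(deadline, lead_days=(30, 7)))
```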

The contractSILO approach (brief)

contractSILO pairs OCR + LLM + RAG with HITL review queues, span-level citations, and role-based access. You can host in the EU (including Germany), enforce SSO/MFA, and export audit-ready logs. Integrations cover e-signature and common CRM/ERP systems, with APIs/webhooks for downstream tasks and alerts. The result: structured contract data you can trust—and prove—without sacrificing privacy or control.

AI won’t magically understand every clause in every contract. But with a disciplined evaluation, explainable predictions, and human oversight where it counts, you can turn static PDFs into a living system of obligations, dates, and risks—complete with alerts and dashboards your business can act on. Follow the two-week POC, demand metrics you can defend, and you’ll know exactly where AI earns its keep—and where humans should stay in the loop.

FAQ

1) How much training data do we need for niche clauses?

Surprisingly little if your vendor supports custom rules and active learning. Start with 30–50 well-annotated examples per clause type across diverse layouts. Focus on quality and variety over raw volume, and re-evaluate after each iteration—recall improves fastest when you add examples that represent failure cases.

2) What’s the difference between precision and recall, and which matters more?

Precision measures correctness when the model does predict (fewer false positives). Recall measures how many true items the model found (fewer misses). For obligations like notice periods, recall often matters more—you’d rather review two false positives than miss one real deadline. Track both, plus F1 (their harmonic mean).

3) Can we test with synthetic documents?

Synthetic docs are useful for stress-testing specific patterns (e.g., nested tables, exotic date formats), but they can mask real-world noise. Use them to supplement—not replace—a representative sample of your actual agreements (scans, negotiated edits, multilingual content).

4) How do we handle multilingual contracts?

Ensure your stack supports language-aware OCR and that the LLM/RAG components use the correct language models. Evaluate per language, not just in aggregate. For critical fields, raise the auto-approve confidence threshold so that more predictions route to HITL review until you build enough examples in each language.

5) When should we use RAG vs. fine-tuning?

Use RAG when answers must be grounded in the original contract with citations (most review tasks). Consider fine-tuning for repetitive classification/extraction tasks where your taxonomy is stable and you need speed at scale. Many teams run both: RAG for explainable Q&A; tuned extractors for high-volume fields.
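To show the shape of the RAG pattern, here is a deliberately tiny sketch: retrieve the clauses most relevant to a question, then build a prompt that forces the answer to cite them. It swaps in plain word overlap where a real system would typically use embeddings or a search index, and it stops before calling any model. The clause records, stopword list, and function names are all assumptions.

```python
import re

# Small illustrative stopword list so common words do not drive the match.
STOPWORDS = {"the", "is", "a", "an", "of", "for", "what", "this", "by", "to", "and"}


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, clauses: list, top_k: int = 2) -> list:
    """Rank clauses by word overlap with the question (a stand-in for vector search)."""
    question_tokens = tokenize(question)
    scored = []
    for clause in clauses:
        overlap = len(question_tokens & tokenize(clause["text"]))
        if overlap:
            scored.append((overlap, clause))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [clause for _, clause in scored[:top_k]]


def build_prompt(question: str, hits: list) -> str:
    """Put the retrieved excerpts, with source tags, in front of the question."""
    context = "\n".join(f'[{h["doc"]} §{h["section"]}] {h["text"]}' for h in hits)
    return (
        "Answer using only the excerpts below and cite the bracketed source.\n"
        f"{context}\nQuestion: {question}"
    )


clauses = [
    {"doc": "MSA-001", "section": "12.2",
     "text": "This Agreement renews automatically unless either party gives "
             "sixty (60) days notice before renewal."},
    {"doc": "MSA-001", "section": "18.1",
     "text": "This Agreement is governed by the laws of Germany."},
]
question = "What is the notice period for renewal?"
print(build_prompt(question, retrieve(question, clauses)))
```

Because every excerpt carries its document and section tag, a reviewer can check the answer against the source clause.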

This article is informational and not legal advice. Work with your counsel and security teams to set requirements appropriate to your industry and jurisdictions.